LLMs are definitely killing trust in open source software, because now anything can be a vibe-coded mess, and that’s sometimes hard to check.

Yeah, it’s to the point now where if I see emojis in the README.md of a repo, I just don’t even bother.

ttbomk, emojis are legal function-names in both Swift & Julia…

The Swift example was damned incomprehensible, & … well, it was Apple stuff, so making it look idiotic might have been some kind of intentional cultural-exclusivity…

The Julia stuff, though, means that you can use Greek symbols, etc, for functions, & get things looking more like what they should…

Also, I think emojis are actually better than my all-text style, for communicating intonation/emotion ( I’m old: learned last century ), & maybe us old geezers ought to adapt a bit, to such things…

That does NOT mean that cartoon “code” is good-enough, whether it’s cartoonish in plaintext or in emojis, though…

I’m just trying to keep cultural-prejudice & code-quality as distinct categories of judgement, you know?

( & cultural-prejudice is an actual thing, though it’s usually called “religious wars”, isn’t it, in geekdom? )

_ /\ _

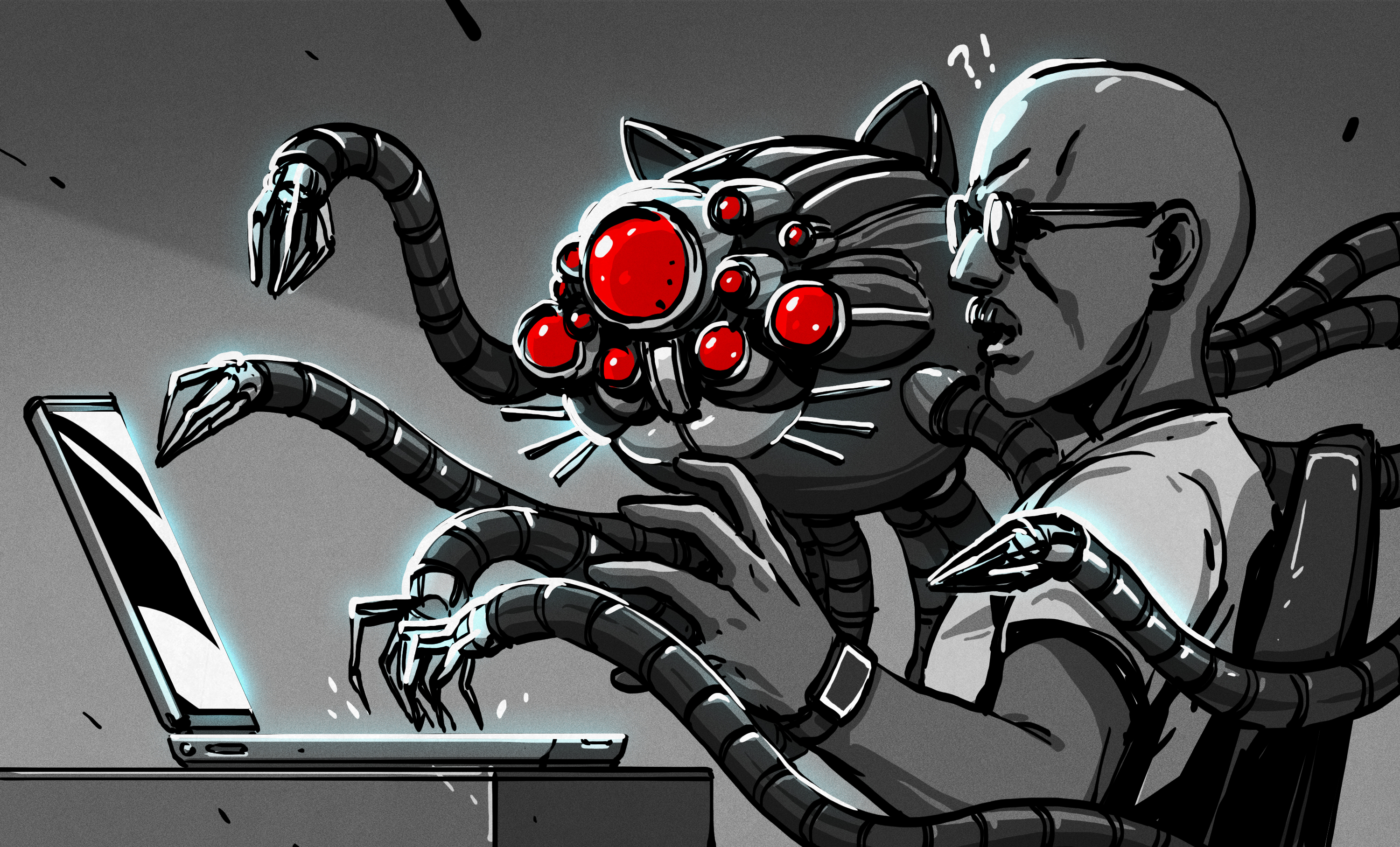

Vibe coding is a black hole. I’ve had some colleagues try and pass stuff off.

What I’m learning is that the code itself is secondary to the understanding you develop by creating it. You don’t create the code? You don’t develop the understanding. Without the understanding, there is nothing.

Yes. And using the LLM to generate the code, then developing the requisite understanding and making it maintainable, is slower than just writing it yourself in the first place. And that effect compounds with repetition.

The Register had an article, a year or 2 ago, about using AI in the opposite way: instead of creating the code, someone was using it to discover security-problems in it, & they said it was really useful for that, & that most of the things it identified, including some codebase which was sending private information off to some internet-server, really were problems.

I wonder if using LLMs as editors, instead of writers, would be a better use for the things?

_ /\ _